Since the release of the 2018 wave of the General Social Survey, the big story has been about the sex drought: rising numbers of Americans, especially young men, are eschewing sex altogether. These data have been puzzled over by the Washington Post, Washington Free Beacon, and the blogger Spotted Toad.

Every time the GSS sex data are discussed, questions come up about their accuracy. Are people really willing to recount their amorous adventures to perfect strangers? I’ve analyzed these data to look at cohort trends in promiscuity, the effects of premarital promiscuity on marital happiness, and the demographics of promiscuous America, so I have a vested interest in their accuracy.

One way to get a sense of the validity of the GSS sex data is to look at different variables measuring celibacy. As far as I can tell, the aforementioned estimates of the sex drought are based on the SEXFREQ variable, which looks like this:

SEXFREQ: FREQUENCY OF SEX DURING LAST YEAR

Question: About how often did you have sex during the last 12 months?

| VALUE | LABEL |
| --- | --- |
| -1 | IAP [Question not asked] |
| 0 | NOT AT ALL |
| 1 | ONCE OR TWICE |
| 2 | ONCE A MONTH |
| 3 | 2-3 TIMES A MONTH |
| 4 | WEEKLY |
| 5 | 2-3 PER WEEK |
| 6 | 4+ PER WEEK |
| 8 | DK [Don’t Know] |
| 9 | NA [No Answer] |
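For readers who want to reproduce a celibacy estimate from this variable, here is a minimal sketch of the recoding step. It uses only the codes listed in the codebook entry above; the function names and the sample responses are made up for illustration, and it ignores survey weights, which a real estimate would likely need.

```python
# Codes taken from the SEXFREQ codebook entry above.
MISSING_CODES = {-1, 8, 9}   # IAP, DK, NA
NO_SEX_CODE = 0              # "NOT AT ALL"


def celibate_flag(sexfreq):
    """Return True/False for a valid answer, None for a missing one."""
    if sexfreq is None or sexfreq in MISSING_CODES:
        return None
    return sexfreq == NO_SEX_CODE


def celibacy_share(codes):
    """Unweighted share of valid respondents reporting no sex in the last 12 months."""
    flags = [celibate_flag(c) for c in codes]
    valid = [f for f in flags if f is not None]
    if not valid:
        return None  # nobody gave a usable answer
    return sum(valid) / len(valid)


# Made-up responses for illustration only, not real GSS records.
sample = [0, 4, 5, -1, 2, 0, 9, 3, 8, 1]
print(celibacy_share(sample))
```

The key design choice is dropping IAP, DK, and NA from the denominator rather than counting them as sexually active, since otherwise the celibacy share would move with the non-response rate.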

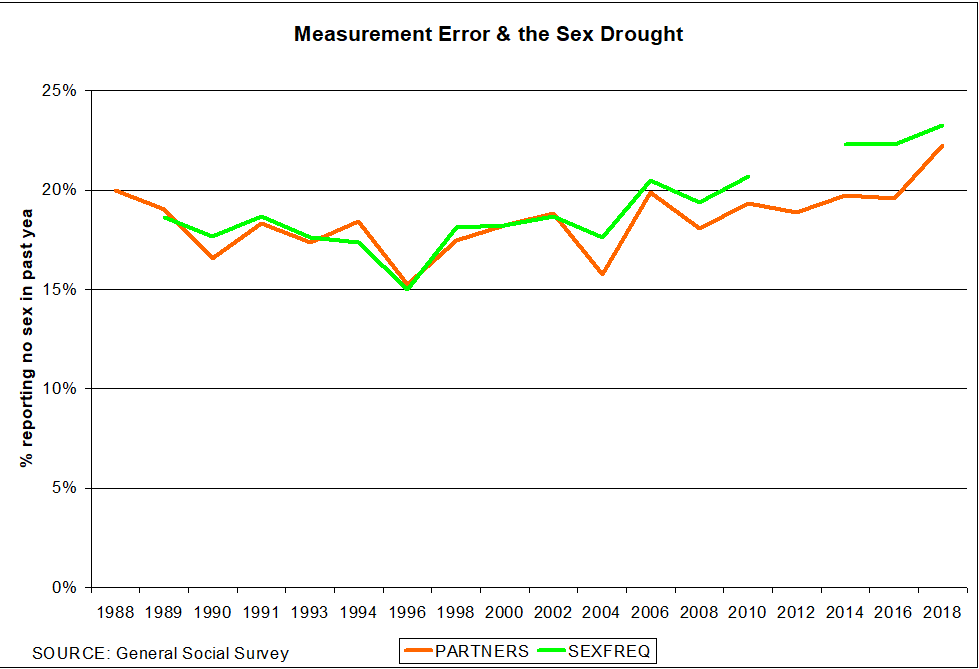

But there’s another option: PARTNERS, which records how many sex partners a respondent has had in the previous 12 months. Our faith in the GSS sex data should increase if the two variables produce the same estimates of the growth in celibate Americans. Here’s what the data look like:

The two variables produce largely similar results. Indeed, PARTNERS seems preferable because it has two more years of data available. PARTNERS was in the 1988 survey, while SEXFREQ didn’t start until 1989. Moreover, the 2012 SEXFREQ data are unusable for measuring abstinence because the base population is different.
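The comparison between the two variables can be sketched as follows. This is an illustration under stated assumptions, not the code behind the charts: the row layout (`year`, `age`, `sexfreq`, `partners`) is hypothetical, the PARTNERS missing codes are an assumption that should be checked against the codebook, the rows are invented, and no survey weights are applied.

```python
from collections import defaultdict

SEXFREQ_MISSING = {-1, 8, 9}      # from the SEXFREQ codebook entry
PARTNERS_MISSING = {-1, 98, 99}   # ASSUMPTION: verify against the PARTNERS codebook


def zero_share_by_year(rows, var, missing, min_age=None, max_age=None):
    """Unweighted share of valid answers equal to 0, per survey year."""
    counts = defaultdict(lambda: [0, 0])  # year -> [zero answers, valid answers]
    for row in rows:
        age = row.get("age")
        if min_age is not None and (age is None or age < min_age):
            continue
        if max_age is not None and (age is None or age > max_age):
            continue
        value = row.get(var)
        if value is None or value in missing:
            continue
        counts[row["year"]][1] += 1
        if value == 0:
            counts[row["year"]][0] += 1
    return {year: zeros / valid for year, (zeros, valid) in sorted(counts.items())}


def gap_by_year(rows, **age_limits):
    """PARTNERS-based celibacy share minus SEXFREQ-based share, per year."""
    by_sexfreq = zero_share_by_year(rows, "sexfreq", SEXFREQ_MISSING, **age_limits)
    by_partners = zero_share_by_year(rows, "partners", PARTNERS_MISSING, **age_limits)
    return {y: by_partners[y] - by_sexfreq[y] for y in by_sexfreq if y in by_partners}


# Invented rows for illustration only, not real GSS records.
rows = [
    {"year": 2008, "age": 25, "sexfreq": 0, "partners": 0},
    {"year": 2008, "age": 40, "sexfreq": 4, "partners": 1},
    {"year": 2018, "age": 22, "sexfreq": 0, "partners": 0},
    {"year": 2018, "age": 30, "sexfreq": 3, "partners": 1},
    {"year": 2018, "age": 61, "sexfreq": 1, "partners": 0},
    {"year": 2018, "age": 55, "sexfreq": 9, "partners": 1},
]

print(gap_by_year(rows))                          # all ages
print(gap_by_year(rows, min_age=18, max_age=34))  # younger adults only
```

A gap near zero in every year is what agreement between the two variables would look like. The optional age limits allow the same check on a subgroup such as adults ages 18-34.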

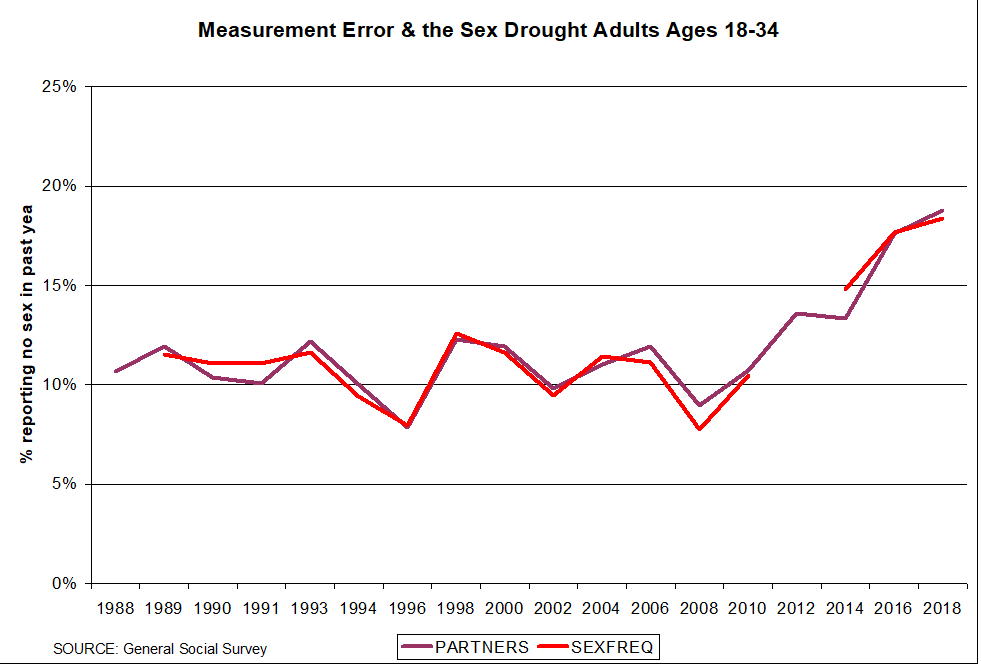

SEXFREQ does seem to under-count celibacy in recent years (alternatively, PARTNERS exaggerates it). But the disparity disappears if the analysis is limited to adults ages 18-34, which several writers have suggested is the epicenter of our celibacy epidemic.

Both variables confirm that the rise in celibacy is strongest among younger Americans.

Why does PARTNERS diverge from SEXFREQ when looking at Americans of all ages, but not younger adults? It probably has to do with measurement error. I’ve noted elsewhere that 7 percent of married women and 9 percent of married men claim zero lifetime sexual partners. Presumably, these respondents misinterpreted the survey question as inquiring about former sex partners. Presumably, too, this misinterpretation is less likely among unmarried survey respondents, and Americans in the 18-34 age range have become less likely to be married in recent years. If this hunch is correct, it would explain why PARTNERS shows more celibacy than SEXFREQ only in the analysis of American adults of all ages.
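To see why this hunch would produce a gap that shrinks among younger adults, here is a toy calculation. Every number in it is hypothetical, chosen only to show the mechanism. In particular, the misreport rate is not the 7 and 9 percent quoted above, which refer to lifetime partners rather than the PARTNERS item.

```python
def zero_partner_shares(married_share, celibate_married, celibate_unmarried,
                        misreport_rate):
    """Return (true share, observed share) of respondents with zero partners.

    misreport_rate is the HYPOTHETICAL fraction of sexually active married
    respondents who wrongly answer zero because they misread the question.
    """
    true_share = (married_share * celibate_married
                  + (1 - married_share) * celibate_unmarried)
    spurious = married_share * (1 - celibate_married) * misreport_rate
    return true_share, true_share + spurious


# All values below are invented for illustration.
all_ages = zero_partner_shares(married_share=0.50, celibate_married=0.05,
                               celibate_unmarried=0.30, misreport_rate=0.05)
young = zero_partner_shares(married_share=0.25, celibate_married=0.02,
                            celibate_unmarried=0.25, misreport_rate=0.05)

for label, (true_share, observed) in (("all ages", all_ages), ("18-34", young)):
    inflation = observed - true_share
    print(f"{label}: true={true_share:.4f} observed={observed:.4f} "
          f"inflation={inflation:.4f}")
```

With the same misreport rate, the inflation scales with the married share, so a group with fewer married respondents shows less spurious celibacy.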

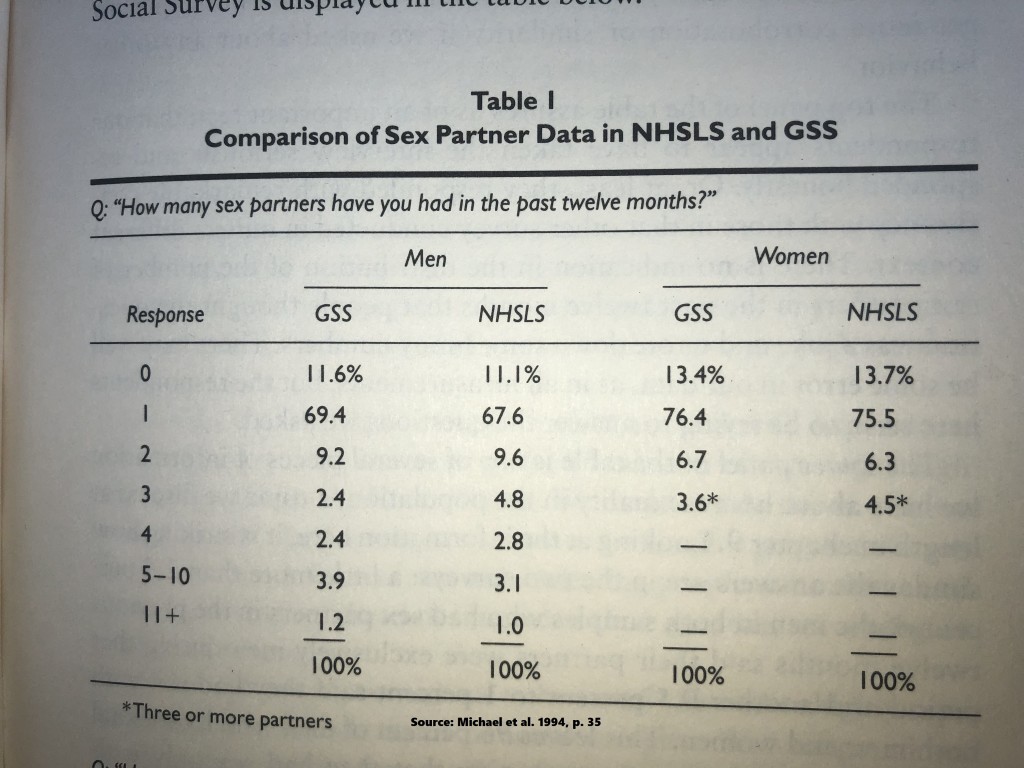

But you don’t have to believe me on the accuracy of the GSS sex data. Take it from sociologist Edward Laumann and his colleagues, who in the 1990s collected the highest quality national data on sex ever. They went to unusual lengths to ensure accurate measurement, then compared their data to the General Social Survey (p. 33). Here’s how their data stacked up against the GSS.

As is apparent, the GSS sex data fare well against Laumann’s NHSLS. (Indeed, they’re also consistent with my analysis of the National Survey of Family Growth.) Maybe recall bias has changed a bit since the early 1990s, but I doubt it. There will always be some measurement error in sex data, but the GSS data seem to be holding their own.

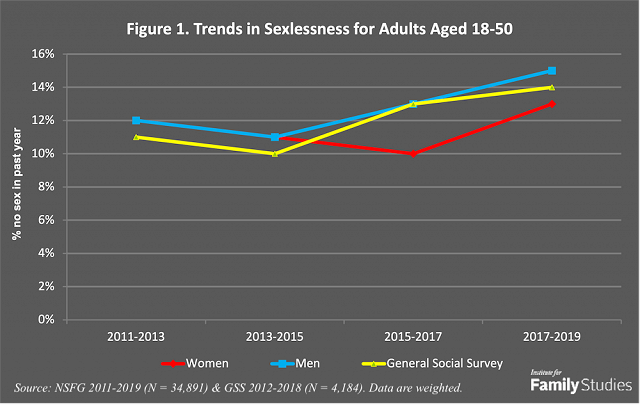

Subsequent to writing this post, I conducted an analysis of celibacy using both the GSS and the National Survey of Family Growth in a post for the Institute for Family Studies. The two surveys produced similar results. So if there is bias, it’s probably endemic to all survey data on sex.

Addendum: one challenge to the GSS sex data (and by extension, sex data from other surveys) has been the big data analysis of Seth Stephens-Davidowitz. It’s worthwhile to quote him at length from a 2017 interview in The Atlantic:

> They have a lot less sex than they say they do. The way I studied this is I looked at condom data. The General Social Survey asks people how frequently they have sex, whether it’s heterosexual or homosexual sex, and whether they use a condom. You do the math. Heterosexual women say they use 1.1 billion condoms every year in heterosexual sex. Men say they use 1.6 billion condoms in heterosexual sex, but you know that someone’s lying. So who’s lying?
>
> Only 600 million condoms are sold every year in the United States. Some of them [are used by] gay men and some of them thrown out. They’re exaggerating how frequently they use a condom. This doesn’t mean that they are lying about how frequently they have sex. They may just be lying about how frequently they use protection when they do have sex, but if you look at how frequently American women of fertility age say they have sex without using any contraception, if they really were having that much unprotected sex, there would be more pregnancies every year in the United States. I think everybody in surveys exaggerates how frequently they have sex, because in today’s culture there is a lot of pressure to have a lot of sex and to not admit if you’re having not that much sex. For both men and women, there is a pressure to exaggerate.
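The arithmetic in the quote is easy to check. The sketch below uses only the three figures Stephens-Davidowitz cites and treats condom sales as an upper bound on heterosexual use, since he notes that some are used by gay men and some are thrown out. The variable and function names are my own.

```python
# Figures as quoted in the interview above (condoms per year, United States).
REPORTED_BY_WOMEN = 1.1e9
REPORTED_BY_MEN = 1.6e9
SOLD = 600e6  # upper bound on heterosexual use


def overstatement_factor(reported, upper_bound):
    """How many times larger the reported total is than the upper bound."""
    return reported / upper_bound


women_factor = overstatement_factor(REPORTED_BY_WOMEN, SOLD)
men_factor = overstatement_factor(REPORTED_BY_MEN, SOLD)
men_vs_women = REPORTED_BY_MEN / REPORTED_BY_WOMEN

print(f"women report at least {women_factor:.2f}x the condoms sold")
print(f"men report at least {men_factor:.2f}x the condoms sold")
print(f"men report {men_vs_women:.2f}x what women report")
```

Because sales are only a ceiling, these factors are lower bounds on the overstatement of condom use. As the quote itself concedes, they do not by themselves show that sex frequency is overstated.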

Seth Stephens-Davidowitz does interesting work, and he’s definitely on to something: I don’t dispute that there are various reporting biases in the GSS and other sex surveys. Nonetheless, it’s hard to see how these biases would differ systematically across most of the variables social scientists study. There is indeed measurement error, but it’s more global than selective. And, of course, big data has its own set of attendant biases.
